Imagine being able to reduce the power consumption of deep learning chips by several orders of magnitude. We did this 15 years ago.

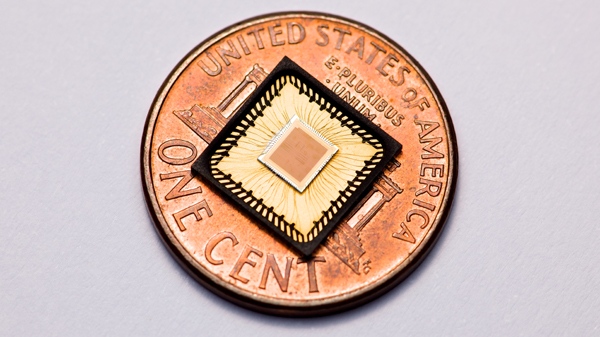

From 2007 to 2011, my startup Lyric Semiconductor, Inc., funded by a combination of DARPA and venture capital, created the first (and so far the only) commercial analog deep learning processor. 440,000 analog transistors did the work of 30,000,000 digital transistors, delivering about 10x better energy efficiency (operations per Joule) than a digital tensor processing core. This analog tensor processing core was designed as a “plug and play” IP block for use within our digital deep learning microchips. (See this post about our overall deep learning processor architecture.)

We published in ACM [1]. We also patented early explorations at MIT [2], the analog computing unit architecture [3], analog storage [4], factor/tensor operations [5], error reduction of analog processing circuits [6], I/O [7], and research on stochastic spiking circuits [8]. We used the terms “factor” and “tensor” interchangeably [9].

Our startup was acquired by Analog Devices, Inc. (ADI), the largest analog/mixed-signal semiconductor company in the US, and became the new machine learning/AI chipset division [10]. Our work also inspired further DARPA work on analog computing [11].

There was also significant press coverage: Wired [12], The Register [13], Reuters [14], The Flash Memory Summit [15], Phys.org [16], The Bulletin [17], ChipEstimate [18], KDnuggets [19].

The main innovation was to take advantage of the fact that weights and activations in deep learning models can be represented by (quantized into) 7-bit numbers without a meaningful loss of accuracy.
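To make “quantized into 7-bit numbers” concrete, here is a minimal sketch of symmetric quantization. The function names, the `[-1, 1]` full-scale range, and the sample weights are illustrative assumptions, not the scheme used in our chip.

```python
def quantize(x: float, bits: int = 7, full_scale: float = 1.0) -> int:
    """Map x in [-full_scale, full_scale] to a signed integer code."""
    max_code = 2 ** (bits - 1) - 1              # 63 for 7 bits
    clipped = max(-full_scale, min(full_scale, x))
    return round(clipped / full_scale * max_code)


def dequantize(code: int, bits: int = 7, full_scale: float = 1.0) -> float:
    """Map a signed integer code back to an approximate real value."""
    max_code = 2 ** (bits - 1) - 1
    return code / max_code * full_scale


# Hypothetical weights, chosen only for illustration.
weights = [0.8, -0.31, 0.05, -0.002]
codes = [quantize(w) for w in weights]
restored = [dequantize(c) for c in codes]

# The round-trip error is bounded by half of one quantization step.
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, f"worst error = {worst_error:.4f}")
```

With 7 bits the worst-case rounding error is half a step, a bit under 1% of full scale in this sketch.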

The noise we observed in our circuits was about 1/128th of our 1.8V power supply (roughly 14 mV), which gives a single wire about 7 bits of usable resolution. That meant we could replace an 8-wire digital bus with a single wire carrying analog current. (In practice we used a differential pair, 2 wires, to represent our analog value more robustly.)
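The arithmetic behind that resolution figure can be sketched in a few lines. This treats the number of distinguishable levels as simply supply divided by noise, which is a simplifying assumption; the function and constant names are made up for the example.

```python
import math


def analog_resolution_bits(supply_volts: float, noise_volts: float) -> float:
    """Bits of resolution if the levels are spaced one noise amplitude apart."""
    return math.log2(supply_volts / noise_volts)


SUPPLY_VOLTS = 1.8
NOISE_VOLTS = SUPPLY_VOLTS / 128    # noise is about 1/128th of the supply

bits = analog_resolution_bits(SUPPLY_VOLTS, NOISE_VOLTS)
print(f"noise ~ {NOISE_VOLTS * 1000:.1f} mV, resolution ~ {bits:.1f} bits")
# prints: noise ~ 14.1 mV, resolution ~ 7.0 bits
```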

Dropping from eight wires to two doesn’t seem like a big enough win to justify the effort of designing analog tensor processors, does it? Furthermore, the win gets even slimmer if you have decided you can quantize your weights and activations down to two bits. In that case we would simply be replacing two digital wires with two analog wires! Definitely not worth the trouble! So why bother?

The real win (about 10x in ops per Joule) came from two things:

- You get to use fewer transistors in the multiply-and-add. The main kind of math that deep learning processors need to do is multiplication and addition. Instead of the few thousand transistors needed to multiply two 8-bit digital numbers, we could use just 6 transistors to multiply two analog numbers. That is roughly 500x fewer!

- Less intense switching means lower power. On average our analog wires were not switching between 0 and 1; they were varying between intermediate current values.

In the digital version, switching our wires from 1.8V to 0V and back again dissipates the majority of power in our processor. On any given digital wire, this happens about half of the times that the processor’s clock ticks.

By contrast, in the analog version, because weights and activations are statistically distributed around 0, on average the currents in our analog wires did not change as widely or as abruptly.
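The switching argument can be illustrated with a toy simulation. It is only a sketch: it assumes values drawn from a zero-centered Gaussian (the `sigma` value is an arbitrary choice), an 8-bit two's-complement digital bus, and a single analog wire whose full range is `[-1, 1]`. It counts how often digital wires flip and how far the analog value moves per clock tick. It does not model real circuit power.

```python
import random


def simulate(num_steps: int = 10_000, sigma: float = 0.2,
             bits: int = 8, seed: int = 0) -> tuple[float, float]:
    """Return (digital toggle rate per wire, mean analog swing per tick)."""
    rng = random.Random(seed)
    max_code = 2 ** (bits - 1) - 1
    mask = 2 ** bits - 1
    prev_value, prev_word = 0.0, 0
    toggles = 0
    total_swing = 0.0
    for _ in range(num_steps):
        # Assumed distribution: Gaussian around 0, clipped to full scale.
        value = max(-1.0, min(1.0, rng.gauss(0.0, sigma)))
        # Digital bus: two's-complement word; count wires that flipped.
        word = round(value * max_code) & mask
        toggles += bin(word ^ prev_word).count("1")
        # Analog wire: change as a fraction of the full range [-1, 1].
        total_swing += abs(value - prev_value) / 2.0
        prev_value, prev_word = value, word
    return toggles / (num_steps * bits), total_swing / num_steps


toggle_rate, mean_swing = simulate()
print(f"digital: {toggle_rate:.2f} full-swing flips per wire per tick")
print(f"analog:  {mean_swing:.2f} of full range per tick")
```

Under these assumptions each digital wire makes a full-rail flip on roughly half the ticks, while the analog value moves only a small fraction of its range each tick.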

Would all of this still work in modern 1nm semiconductor chips? One would have to try it to find out for certain, but it’s fairly likely to still work. If the noise floor in chips has not changed very significantly, then dropping the power supply from 1.8V to 0.5V would still provide us with 5 bits of analog resolution to represent weights and activations. 5 bits should still be adequate for today’s deep learning quantization.
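As a quick check of the 5-bit estimate, here is the same back-of-the-envelope calculation at both supply voltages. It assumes the noise floor stays at 1/128th of 1.8V, which is the untested "if" in the paragraph above; the names are illustrative.

```python
import math


def usable_bits(supply_volts: float, noise_volts: float) -> int:
    """Whole bits available when levels are spaced one noise amplitude apart."""
    levels = supply_volts / noise_volts
    # The small epsilon guards against floating-point error at exact powers of two.
    return math.floor(math.log2(levels) + 1e-9)


NOISE_FLOOR_VOLTS = 1.8 / 128   # assumed unchanged from our original circuits

bits_then = usable_bits(1.8, NOISE_FLOOR_VOLTS)   # original 1.8V supply
bits_now = usable_bits(0.5, NOISE_FLOOR_VOLTS)    # hypothetical 0.5V supply
print(f"1.8V supply: {bits_then} bits, 0.5V supply: {bits_now} bits")
```

At 0.5V the supply spans about 35 noise-sized levels, which rounds down to 5 whole bits.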

In 2010, a small percentage of the world’s computing workloads involved deep learning. Today deep learning workloads are becoming a driver of global energy consumption. There is talk of AI (deep learning) data centers consuming the equivalent of dozens of Manhattans of electricity. A 10x efficiency win matters even more today than it did then!

- Low power logic for statistical inference, Vigoda, Benjamin and Reynolds, David and Bernstein, Jeffrey and Weber, Theophane and Bradley, Bill, Association for Computing Machinery, 2010. ↩︎

- Analog Continuous Time Statistical Processing, Vigoda, Benjamin and Gershenfeld, Neil, Massachusetts Institute of Technology, issued December 28, 2010. US Patent 7,860,687 B2. ↩︎

- Belief Propagation Processor, Reynolds, David and Vigoda, Benjamin, Mitsubishi Electric Research Laboratories / Analog Devices, Inc., issued August 5, 2014. US Patent 8,799,346 B2. ↩︎

- Storage Devices with Soft Processing, Vigoda, Benjamin and Bernstein, Jeffrey and Venuti, Jeffrey and Alexeyev, Alexander and Nestler, Eric and Reynolds, David and Bradley, William and Zlatkovic, Vladimir, Analog Devices, Inc., issued May 19, 2015. US Patent 9,036,420 B2. ↩︎

- Programmable Probability Processing, Bernstein, Jeffrey and Vigoda, Benjamin and Nanda, Kartik and Chaturvedi, Rishi and Hossack, David and Peet, William and Schweitzer, Andrew and Caputo, Timothy, Analog Devices, Inc., issued February 7, 2017. US Patent 9,563,851 B2. ↩︎

- Apparatus and Method for Reducing Errors in Analog Circuits While Processing Signals, Vigoda, Benjamin, Mitsubishi Electric Research Laboratories, Inc., issued August 31, 2010. US Patent 7,788,312 B2. ↩︎

- Signal Mapping, Vigoda, Benjamin and Bernstein, Jeffrey and Alexeyev, Alexander and Venuti, Jeffrey, Lyric Semiconductor, Inc. / Analog Devices, Inc., published November 4, 2010. US Patent Application 20100281089 A1 (granted as US8,572,144 B2). ↩︎

- Mixed Signal Stochastic Belief Propagation, Bernstein, Jeffrey and Vigoda, Benjamin and Reynolds, David and Alexeyev, Alexander and Bradley, William, Analog Devices, Inc., issued July 29, 2014. US Patent 8,792,602 B2. ↩︎

- In a factor graph computing belief propagation, we could have, for example, a softAND gate with incident edges A, B, C. Logically, C = AND(A,B), which yields the tensor or “factor” computation $p_C(C) = \sum_{A,B} \delta(C - \mathrm{AND}(A,B))\, p_A(A)\, p_B(B)$. See Accelerating Inference: towards a full Language, Compiler and Hardware stack, Hershey, Shawn and Bernstein, Jeffrey and Bradley, Bill and Schweitzer, Andrew and Stein, Noah and Weber, Théophane and Vigoda, Benjamin, NIPS Workshop on Probabilistic Programming, December 12, 2012. (arXiv:1212.2991) ↩︎

- ADI buys Lyric - probability processing specialist, Reynolds, Dave and Vigoda, Ben, EE Times, June 13, 2011. ↩︎

- Upside, Wired, August 2012. ↩︎

- Probabilistic Chip Promises Better Flash Memory, Spam Filtering, Wired, August 2010. ↩︎

- DARPA Funds Mr Spock on a Chip, The Register, August 17, 2010. ↩︎

- The Odds Are Good That Lyric Semiconductor Will Change Computing, Reuters, August 2010. ↩︎

- LDPC Error Correction Using Probability Processing Circuits, Vigoda, Benjamin, Flash Memory Summit, Session 201, August 19, 2010. ↩︎

- Computer Chip That Computes Probabilities and Not Logic, Phys.org, August 19, 2010. ↩︎

- A Chip That Calculates the Odds, Vance, Ashlee, Bend Bulletin (via New York Times), August 18, 2010. ↩︎

- MIT Spin-Out Lyric Semiconductor Launches a New Kind of Computing With Probability Processing Circuits, ChipEstimate, August 2010. ↩︎

- A Chip That Digests Data and Calculates the Odds, Vance, Ashlee, New York Times (via KDnuggets), August 17, 2010. ↩︎